What if You Could Control Reality With a Single Touch?

A project exploring line-of-sight interaction with the digital world.

I wanted to create a wireless, touch-sensitive headband that allowed line-of-sight interaction with smart home devices: you could look at a light switch and tap your headband to turn it on, then swivel around to look at the ceiling fan and swipe backwards on your headband to reduce its speed. This was my vision.

Humans have limbs that allow for flexibility, mobility and 360 degrees of freedom. And yet, in our day-to-day lives, we find ourselves confined to small screens, with interaction limited to moving a mouse pointer in two dimensions, far from using our full potential.

Over the last decade, there have been many experiments in the field of human-computer interaction, such as the fascinating SixthSense concept, and with developments in virtual and augmented reality, this is starting to change.

I wanted to build on this, with the aim of exploring different modes of interaction with the digital world around us, the simplest being the smart home. The rise of the Internet of Things has given us a wide range of devices dotted around our homes.

From this, an opportunity arises to explore various modes of interaction with these devices. Instead of controlling them from a mobile phone or a simple wall switch, I wanted to look at other possibilities, and thus the smart headband was born. I am interested in human-computer interaction as well as cool tech, so such an open project offered great scope for development in various aspects of product design.

This kind of interaction with smart home devices requires some form of wearable technology, and I wanted to focus on the product design as well as the technical details, as these go hand in hand in the real world. Along with other sources, I mainly drew ideas from an article about intuitive design from the Interaction Design Foundation. This greatly helped me to understand the key aspects of designing products for users, and led me to think from the user’s perspective when considering wearable technology.

With a better understanding, I was able to thoroughly analyse products on the market or prototypes that have been developed. This allowed me to list pros and cons of each product, from a design and user experience viewpoint, followed by key takeaways I would try to incorporate into my own design.

One of the most fascinating was the SixthSense concept, unveiled at a TED talk in 2009. Although the prototype was demoed in a controlled environment and was not a commercially viable product at the time, it gave a captivating glimpse into a future of human-computer interaction that would give us a greater degree of freedom.

Since that 2009 demo, there have been great strides in bridging the gap between humans and the digital world, from rapid progress in virtual and augmented reality to initial developments in brain-computer interfaces. The best known of these is Neuralink, a startup backed by Elon Musk, which aims to implant chips that create a direct link between our brains and the digital world. I explored this concept and its possibilities in one of my previous articles.

Following the research, I brainstormed key attributes I wanted my product to have, for good design and user experience, before coming up with the final objectives.

The idea seemed doable with current off-the-shelf components, so I set about working out the technical details, researching the various components required to turn it into a reality.

Having a keen interest in the maker world, I had a general feel for what I would require, with the main component being the microcontroller.

Microcontroller — For this, I chose the ESP8266 as it is a low-cost Wi-Fi chip with a full TCP/IP stack, i.e. it could connect to local Wi-Fi for communication between the modules and the hub.

Gesture Input — As for the gesture recognition, from initial research, it became clear that I required some sort of variable resistor, which changes resistance with position.

Wireless Communication — I chose MQTT due to its low latency and ease of use, with libraries available for the ESP8266.

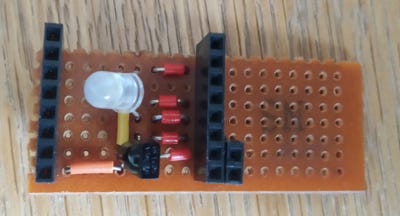

Device Selection — As I wanted line-of-sight interaction, infrared was the most straightforward option, with it being used day to day in our remote controls.

Although I did consider cameras mounted on the headband, which would detect ‘markers’ around the room, I realised that cameras on wearable devices are not a great choice as they raise privacy concerns, as well as increasing cost and complexity. This concluded the research side of the project, with a summary of key components I would require.

By thinking through the interaction process, I realised that every module would have the same functionality apart from the unique device controls, if and when selected. Duplicating that logic in every module would not be sustainable or robust, which led me to create a separate ‘hub’ that centrally controls the smart devices over 433MHz RF, with the modules dotted around the house used only for device selection. This parallels how real-world smart home systems operate.
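The split between selection modules and a central hub can be sketched roughly as below. This is only an illustration of the idea, not the firmware used in the project: the `Hub` class, its method names, the device names and the RF code values are all hypothetical, and it assumes the hub simply remembers the most recently selected device and looks up which 433MHz code to replay for an incoming gesture.

```cpp
#include <cstdint>
#include <map>
#include <string>
#include <utility>

// The four gestures named in the article.
enum class Gesture { Tap, LongTap, SwipeForward, SwipeBackward };

// Hypothetical hub state: remembers the last selected device and maps
// (device, gesture) pairs to the 433MHz code that should be replayed.
class Hub {
public:
    // Register the RF code to replay when `gesture` arrives for `device`.
    void addAction(const std::string& device, Gesture gesture, std::uint32_t rfCode) {
        actions_[std::make_pair(device, gesture)] = rfCode;
    }

    // Called when a selection module reports that it has been selected.
    void onSelection(const std::string& device) {
        selected_ = device;
        hasSelection_ = true;
    }

    // Called when the headband reports a gesture. Writes the RF code to
    // transmit into `rfCodeOut` and returns true, or returns false if no
    // device is selected or no action is mapped for this gesture.
    bool onGesture(Gesture gesture, std::uint32_t& rfCodeOut) const {
        if (!hasSelection_) return false;
        auto it = actions_.find(std::make_pair(selected_, gesture));
        if (it == actions_.end()) return false;
        rfCodeOut = it->second;
        return true;
    }

private:
    bool hasSelection_ = false;
    std::string selected_;
    std::map<std::pair<std::string, Gesture>, std::uint32_t> actions_;
};
```

With this layout, adding a new device means registering its actions on the hub only; the selection modules stay identical and just report that they were selected.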

While sketching out initial ideas, I bought the components chosen during my research and started development.

I began with gesture recognition, as this was the key part of the system. Using a potential divider, I reduced the 3.3V input to 0.76V, as the analog input pin accepts a maximum of 1V. As resolution was not a requirement, the reduced range was not a problem. To keep the user interface simple, I kept the possible interactions to a minimum, with the only controls being tap, long tap, forward swipe and backward swipe.
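As a rough sketch of this step, the code below shows the divider arithmetic and one possible way to tell the four gestures apart. Everything here is an assumption for illustration: the resistor values (10kΩ and 3kΩ happen to give about 0.76V from 3.3V), the threshold constants, the function names, and the convention that a rising reading means a forward swipe. The actual values and logic used in the project are not stated.

```cpp
#include <cstdint>

// Output of an unloaded two-resistor divider: Vout = Vin * R2 / (R1 + R2).
constexpr double dividerOutputVolts(double vin, double r1Ohms, double r2Ohms) {
    return vin * r2Ohms / (r1Ohms + r2Ohms);
}

enum class Gesture { Tap, LongTap, SwipeForward, SwipeBackward };

// Hypothetical thresholds; real values would need tuning on the hardware.
constexpr int kSwipeMinTravel = 200;       // minimum change in ADC counts for a swipe
constexpr std::uint32_t kLongTapMs = 600;  // touches at least this long are long taps

// Classify one touch from the ADC reading at touch-down, the reading at
// release, and how long the finger stayed on the strip.
// Assumption: a larger reading means the finger is further forward.
Gesture classifyGesture(int startReading, int endReading, std::uint32_t durationMs) {
    const int travel = endReading - startReading;
    if (travel >= kSwipeMinTravel) return Gesture::SwipeForward;
    if (travel <= -kSwipeMinTravel) return Gesture::SwipeBackward;
    return durationMs >= kLongTapMs ? Gesture::LongTap : Gesture::Tap;
}
```

For example, `dividerOutputVolts(3.3, 10000.0, 3000.0)` evaluates to roughly 0.76, and a touch that barely moves but lasts 900ms would be classified as a long tap under these thresholds.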

During this time, I also realised that it is more robust and efficient to check the internal system clock and act once enough time has passed, instead of using the delay() function, which pauses all processing. I ran into this problem early on, because the code had to keep processing while waiting for user input in order to detect the correct gesture.
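A minimal sketch of the clock-checking pattern is shown below. The `IntervalTimer` class and its `due` method are hypothetical names, and the current time is passed in as a parameter so the logic can be read (and tried out) away from the board; on the ESP8266 that value would typically come from Arduino's `millis()`.

```cpp
#include <cstdint>

// Fires at a fixed interval by comparing timestamps rather than blocking.
class IntervalTimer {
public:
    explicit IntervalTimer(std::uint32_t intervalMs) : intervalMs_(intervalMs) {}

    // Returns true once at least intervalMs_ has elapsed since the last
    // time it returned true (the timer starts counting from time 0).
    // Unsigned subtraction keeps the comparison correct when the
    // millisecond counter wraps around.
    bool due(std::uint32_t nowMs) {
        if (nowMs - lastMs_ < intervalMs_) return false;
        lastMs_ = nowMs;
        return true;
    }

private:
    std::uint32_t intervalMs_;
    std::uint32_t lastMs_ = 0;
};
```

Inside an Arduino-style `loop()`, a call such as `if (sampleTimer.due(millis())) { /* read the touch strip */ }` lets the rest of the loop keep running between samples, whereas `delay()` would stall everything until it returned.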

For the MQTT broker, it seemed easier and more economical to host it in the cloud with a third-party service such as CloudMQTT. In preliminary testing, there was no observable latency and messages appeared to arrive instantly.

As for IR control, this was relatively simple to achieve using an online wiring guide. I recorded and replayed my TV remote’s IR signals for line-of-sight communication, which instantly gave me control of my TV without an additional module. To direct the IR beam, I stuck some tape around the LED.

Throughout the development, I learnt C++ for programming the microcontroller, as I had no prior experience with the language.

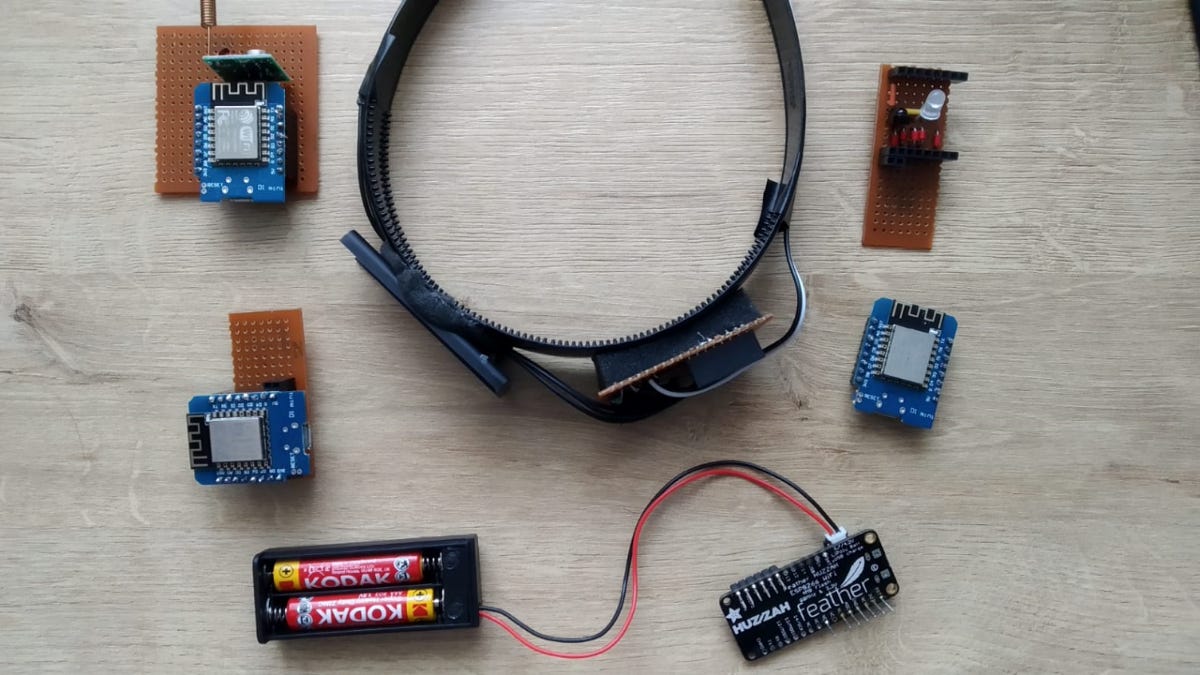

As the prototyping was done on breadboards, for a more permanent solution, I soldered the components onto spare stripboard, minimising the space used to reduce the overall size.

In terms of modelling, I decided not to go ahead with 3D modelling. The electronics turned out to be larger than I had imagined, and designing a casing for such a prototype would not be an efficient use of my time or money, as a finished headband would be nowhere near the size of the prototype.

Instead, I bought a cheap plastic headband onto which I stuck the electronics and battery pack. This turned out to be a fantastic decision and allowed me to focus my time and energy on other development.

After a few months of intermittent hard work, I had finally finished the project. And here it is 👇.

For the proof of concept, I created two selection modules and a ‘hub’ module for controlling the devices.

Finally, it was time for testing. Based on basic functionality tests, this prototype offers a glimpse into what a similar future device could be like, and demonstrates a new mode of interaction with the digital world through line-of-sight control.

My testing setup included a 433MHz RF-controlled extension lead, used to control a lamp with line-of-sight interaction, with the ‘hub’ module hooked up to a 433MHz RF transmitter to replay the remote codes.

IR line-of-sight selection seemed to work reliably when there was around 2m between selection modules, which was quite good.

In conclusion, I believe this project was a success in demonstrating an alternative mode of interaction with the digital world and proving IR can be reliably used for line-of-sight selection. From testing and discussion with others, many people found line-of-sight interaction a fascinating concept, as well as the future possibility of such devices assisting those with less mobility.